Customize Workflows with File to API Integration

Introduction

Traditional managed file transfer (MFT) solutions, whether on-premises or cloud-based services, are typically good at one thing: performing the core file exchange from source to destination systems and transmitting raw or encrypted file data. Since MFT came of age in the mid-2000s, the landscape has shifted rapidly toward cloud-hosted applications, and IT infrastructure has become increasingly diverse and distributed. As a result, functional requirements are evolving too. Transferring unaltered files from source to destination is no longer enough. The file transfer must now perform in-transit processing on the file data itself, treating it as records or events distributed across your diverse and specialized SaaS and enterprise applications.

Microservices based API integration

The RoboMQ MFT service allows you to transfer files between file systems using a variety of standard file transfer protocols, including FTP, SFTP, and SCP, over various storage back ends such as S3, object stores, and cloud storage. However, a door to far broader possibilities opens when the core file handling and processing are merged with the API integration capabilities of the microservices-based MFT solution.

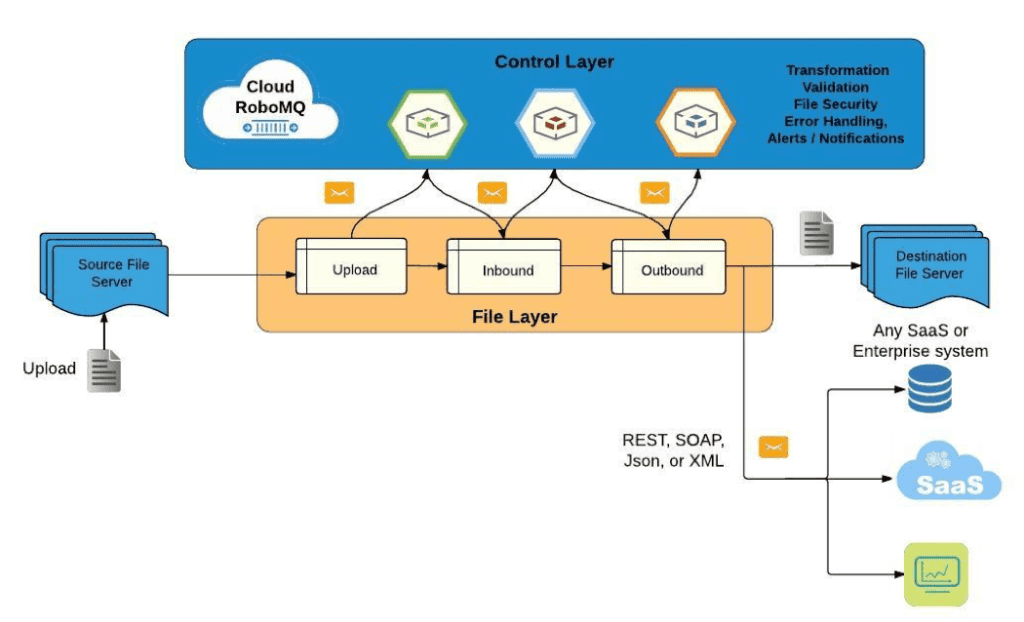

Fig 1: Customize Workflows with File to API Integration

Within the Control Layer, all file handling and processing tasks are performed by the RoboMQ functional components, or microservices, depicted as hexagons in the figure above. These components can be assembled within a messaging fabric to create an event-driven MFT workflow that is robust, scalable, and reliable. Any number of tasks can be added to the workflow, for instance file transformation, validation, encryption, or any custom task of choice. In addition, given the protocol-agnostic capabilities of the RoboMQ platform, file processing can expand beyond conventional SFTP or SCP file transfer to integrate with REST and SOAP APIs carrying JSON or XML payloads. The use cases below only scratch the surface.
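As a rough illustration of how tasks chain together in such a workflow, here is a minimal in-process sketch. It is not RoboMQ code: the task names (`validate_not_empty`, `transform_to_upper`, `encode_base64`) and the `run_workflow` helper are hypothetical, a plain function call stands in for the messaging fabric, and Base64 encoding stands in for a real encryption step.

```python
import base64
from typing import Callable, Iterable

# A task receives the file payload as bytes and returns the processed payload.
Task = Callable[[bytes], bytes]


def validate_not_empty(data: bytes) -> bytes:
    """Hypothetical validation task: reject empty or whitespace-only payloads."""
    if not data.strip():
        raise ValueError("empty file payload")
    return data


def transform_to_upper(data: bytes) -> bytes:
    """Hypothetical transformation task: upper-case the text content."""
    return data.decode("utf-8").upper().encode("utf-8")


def encode_base64(data: bytes) -> bytes:
    """Placeholder for an encryption task. Base64 is an encoding, NOT encryption."""
    return base64.b64encode(data)


def run_workflow(data: bytes, tasks: Iterable[Task]) -> bytes:
    """Pass the payload through each task in order, as a broker would route it."""
    for task in tasks:
        data = task(data)
    return data


result = run_workflow(
    b"id,name\n1,ada\n",
    [validate_not_empty, transform_to_upper, encode_base64],
)
```

Because every task shares the same signature, adding or reordering steps only changes the list passed to `run_workflow`. In a real deployment each task would presumably be a separate service consuming from and publishing to the broker.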

File to event processing:

- RoboMQ microservices offer a variety of ways to add event-driven functionality to your workflow. Take, for example, a CSV file, a simple format for storing tabular data that is used by almost any business application. From that source file, our microservices can break the file into individual rows, each treated as a record. Those records are then processed as events from that point forward throughout the workflow.

- Log files are another use case. Whether they come from system or application logging, they capture events that may require reformatting, filtering, or alerting based on severity (e.g., errors, warnings, information), or that should trigger an action when evaluated against known thresholds or alert conditions.

- More advanced use cases may capture log events and feed them to a machine learning or real-time analytics engine to learn and identify threats and issues. See our March 2016 article for a deeper look at this use case. The ThingsConnect suite of adapters and connectors lets you process events from any system in any protocol, including MQTT, AMQP, SOAP, HTTP, and REST services.

- For example, HR or payroll processing solutions require retrieval of employee identity and job-related attributes. As many finance and human resources leaders, including ADP and Workday®, offer the latest REST API integration technologies, workflows could be customized to query those resources and seamlessly aggregate the results into output files for transfer to a point of sale (POS) or HR management system.

- Many use cases for retrieval from accounting, sales reconciliation, or inventory tracking systems fit into this aggregation scenario. For example, adding another microservice to the business workflow could trigger regularly scheduled queries against customer relationship management (CRM) software. Many cloud CRM services, including Salesforce and Microsoft Dynamics CRM, offer REST API access.
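The two directions described in this list, splitting a CSV file into per-row events and aggregating retrieved records back into an output file, can be sketched with Python's standard `csv` module. This is only an illustration under assumptions: the function names, the event envelope (`source`, `row`, `record`), and the sample data are hypothetical, and the records that a real workflow would fetch from a REST API are simply passed in as dictionaries.

```python
import csv
import io
from typing import Dict, Iterator, List


def csv_to_events(csv_text: str, source: str) -> Iterator[Dict]:
    """Yield one event per data row; the header row supplies the field names."""
    reader = csv.DictReader(io.StringIO(csv_text))
    for row_number, row in enumerate(reader, start=1):
        # Hypothetical event envelope: origin file, row position, and the record.
        yield {"source": source, "row": row_number, "record": dict(row)}


def records_to_csv(records: List[Dict[str, str]]) -> str:
    """Aggregate records (e.g. results of API queries) into CSV text."""
    if not records:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


sample = "employee_id,email\n101,ada@example.com\n102,grace@example.com\n"
events = list(csv_to_events(sample, source="employees.csv"))
output_file_text = records_to_csv([event["record"] for event in events])
```

Each event carries enough context (file name and row number) for a downstream step to report errors against the original file. The aggregation function assumes every record has the same keys as the first one.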

File to stream processing:

- Another increasingly popular use case is stream processing. Given RoboMQ's pluggable broker support, Apache Kafka can serve as the messaging transport, which makes it a good fit for stream processing applications. Events or data can be captured as a stream from a source file and then fed to other data systems such as relational databases, object stores, or data warehouses.

- In some cases, file transfer over HTTP may simply be useful for uploading large files to a destination server. In all of the cases above where events need to be captured, for instance changes to an employee's email address or other attributes in the HR use case, event-handling microservices can be added to the workflow to update those attributes or perform any action in response to that event (see our employee life cycle management solutions).

- Additional validation microservices can be joined into the workflow to flag and alert on any invalid or missing content. This can save HR managers and system administrators countless hours otherwise spent tracking down these errors. Finally, specific actions can be executed through services like Twilio, which offers a REST API to make outgoing calls or send SMS messages to a phone. APIs are also available for sending email or creating a case or ticket in Salesforce, ServiceNow, or Jira.
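The validation step mentioned in the last item can be sketched as a function that inspects each record and returns a list of problems. The required fields, the deliberately loose email pattern, and the sample records are all assumptions made for illustration; in a real workflow, a non-empty problem list would presumably trigger an alert or a ticket rather than just being collected.

```python
import re
from typing import Dict, List

# Hypothetical rules: which fields must be present, and a loose email check.
REQUIRED_FIELDS = ("employee_id", "email")
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_record(record: Dict[str, str]) -> List[str]:
    """Return a list of problems found in the record (empty if it is valid)."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not (record.get(field) or "").strip():
            problems.append(f"missing {field}")
    email = (record.get("email") or "").strip()
    if email and not EMAIL_PATTERN.match(email):
        problems.append("invalid email")
    return problems


records = [
    {"employee_id": "101", "email": "ada@example.com"},
    {"employee_id": "", "email": "grace@example"},
    {"employee_id": "103"},
]

# Map each failing record's position to its problems so it can be flagged.
flagged = {}
for index, record in enumerate(records):
    problems = validate_record(record)
    if problems:
        flagged[index] = problems
```

Returning every problem, instead of stopping at the first, lets a single alert describe everything that needs fixing in a record.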

In summary, RoboMQ is middleware with no protocol of its own that can support any protocol, including conversion among them. RoboMQ provides seamless integration of file-based systems, SaaS and cloud systems, and diverse applications, all over a distributed system. Finally, because it is an enterprise-grade MFT platform, management, error handling, and recovery features are built in to provide reliable and secure file transfer.